As the world moves toward a cashless financial system and ultimately the Mark of the Beast to validate identities (see The Identification Problem), a significant new technology has been developed that will partially solve the problem of identity fraud. This invention is so important that it may also represent one of the last stages in the evolution of Electronic Funds Transfer (EFT) before the Mark arrives. In no case is the merging of microprocessors and credit card technology better seen than in the development of what has become known as the smart card. Originally a French invention made in 1974 by Roland Moreno, this major advance in credit and debit card technology is proving to be the answer to making the cashless society a reality while reducing the risk of electronic theft.

By the late 1970s, companies were beginning to develop ways to embed secure microcontrollers and integrated circuits in cards for cashless commerce. By 1984, the first trials of smart cards for use in Automated Teller Machines (ATMs) were completed in Europe and were met with enthusiasm. In those early days of smart card development, Robert McIvor, writing for Scientific American, said:

“Within a year it may be possible to carry much of the power of a personal computer in one small compartment of a billfold. It will reside in a device called a smart card: one or more microelectronic chips mounted in a piece of plastic the size of a credit card” (Scientific American, Nov. 1985, p. 152).

Over the next 25 years, advances in electronic technology made circuitry ever smaller and denser, while the computing power of microprocessors grew almost exponentially (see The Cashless Society). For instance, microelectronics has shrunk the computer from stationary, room-sized behemoths weighing thousands of pounds to portable devices such as smartphones that fit easily in a pocket. In addition, the computing power of microprocessors has grown from at most thousands of calculations per second to as many as trillions of calculations per second. Processors today are capable of rendering advanced graphics, high-resolution video, or virtually any other processing function imaginable.

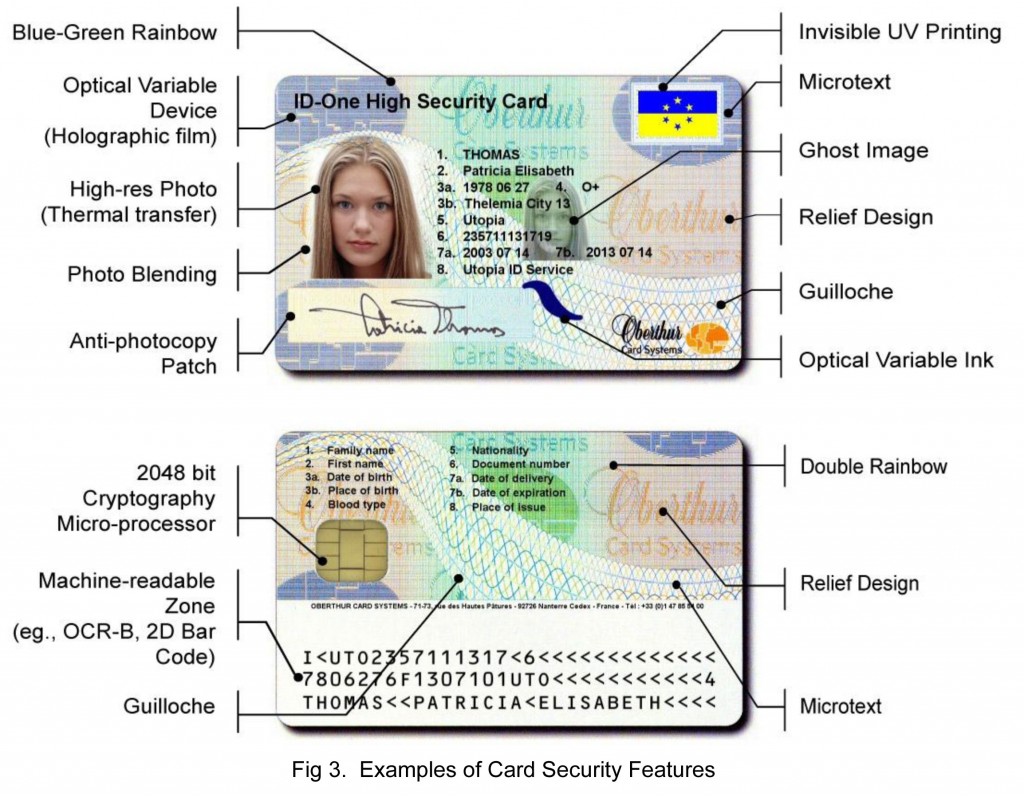

In the mid-1980s, the microprocessor chip and the credit card were successfully merged into what became known as the smart card. Born of the same electronic revolution that had given rise to the central processing unit (CPU), the smart card was the equivalent of a computer sandwiched within the thin plastic layers of an otherwise normal-looking credit card. Within each smart card were placed one or more silicon chips, each less than eleven-thousandths of an inch thick. Etched on each chip's surface was the electronic circuitry necessary to transform plastic money into an intelligent and programmable instrument of commerce.
